The Operating System Every Data Engineering Leader Needs

A practical system for managing data engineering teams that actually deliver

This article is part of the Build & Lead Data Teams playlist.

Reading time: 17 minutes

Nobody teaches you how to manage a data engineering team. You get promoted because you were good at the technical work, handed a calendar full of meetings, and expected to figure the rest out. Most people never do.

Fewer than half of data and analytics teams effectively deliver business value to their organizations, according to Gartner.

Not because data engineers lack skill. Because the leaders above them have no system for turning that skill into consistent delivery.

Why Managing Data Engineering Teams Is Hard

The skills that got you promoted are the wrong skills for the job you now have

You spent years mastering pipelines, architecture, and debugging. Those skills made you visible. They also made you exactly the wrong candidate for what leadership actually requires.

Technical skill is individual, but leadership is organizational. The moment you become responsible for a team’s output rather than your own, you need a completely different set of tools.

Nobody in data engineering ever gets taught what those tools are.

The instinct most new leaders follow is to stay close to the technical work. It feels productive, because you can add visible value there. What you are actually doing is avoiding the harder, less legible work of building a functioning team.

Most data engineering teams run on luck

85% of big data projects fail, and the primary causes are not technical. They are misalignment, poor communication, and leadership that cannot bridge the gap between what engineers build and what the business needs.

Your team probably looks fine from the outside. People are busy, work is moving. Nobody is visibly on fire. What you cannot see without a system:

Who is blocked but not saying it

Which project is slipping its deadline

Whose work is duplicating someone else’s

What the team thinks is a priority versus what actually is

By the time those things become visible, you are already behind.

The consequence of no system is invisible, until it isn’t

Failure in data engineering leadership accumulates.

A blocker sits for four days because nobody asked the right question. A project drifts because the goal was never written down. Someone burns out because you had no signal they were underwater. A stakeholder loses confidence because the same incident happened twice without any visible response.

Only 48% of digital initiatives meet or exceed their outcome targets globally. The organizations that hit 71% success rates share one thing: co-owned delivery with clear accountability structures between technical and business leadership. Not better engineers. Better operating infrastructure.

You need a system. Here it is (the templates are available to download at the end of the article).

This publication is not about tools.

It is about operating as a data professional in a world that has no idea what you do or why it matters.

The Operating System

The OS has two layers:

Rhythm is the set of recurring rituals that create a shared cadence, so everyone knows what is happening, when, and what is expected.

Memory is the set of processes that capture information so the team does not depend on any individual’s recall.

Most leaders have a broken version of the rhythm layer. Almost nobody has the memory layer. That gap is where teams fail.

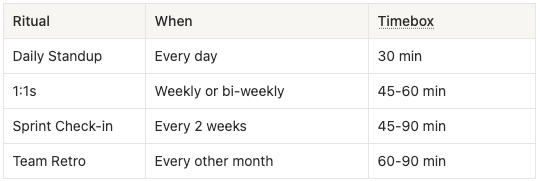

The four rituals that run a high-performing data engineering team

Each ritual has a specific job, and when you know what that job is, you stop letting them bleed into each other.

Standups stop turning into planning sessions.

1:1s stop being status updates with one person.

Check-ins stop being the meeting where people vent and nothing changes.

Retros stop being fake appraisal meetings.

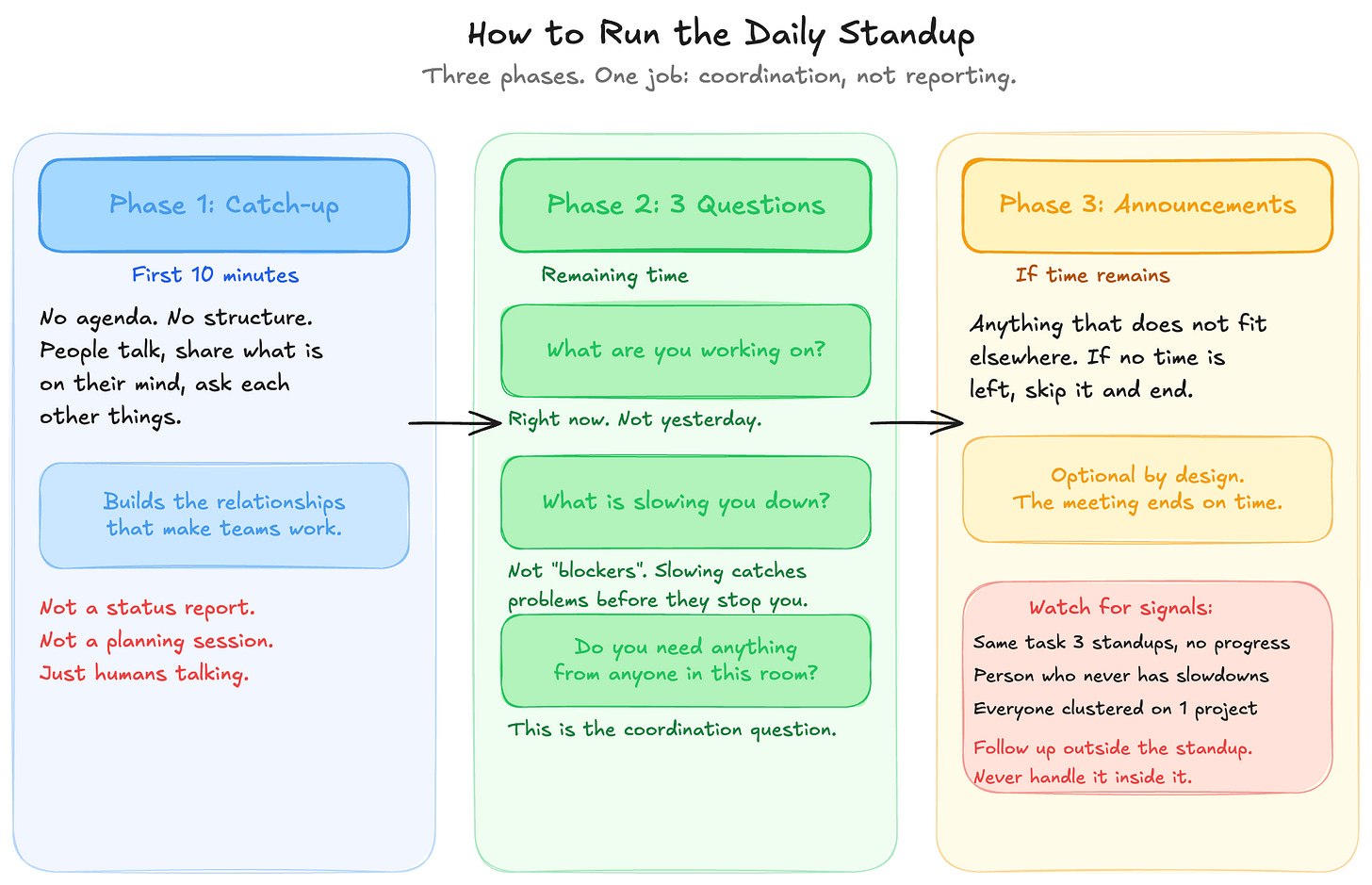

The Daily Standup

Most standups are not a ritual

Your standup probably goes like this: everyone says what they did yesterday, what they are doing today, whether they have blockers. People say no blockers. The meeting runs 45 minutes. Nobody learned anything useful.

You keep running it this way because it feels like structure. It seems to work.

It does not work. It is a reporting session disguised as a coordination ritual. Blockers never surface because you are asking a yes-or-no question about them, and things slow you down long before they stop you completely.

Run the standup in three phases

Phase 1: Catch-up (first 10 minutes). No agenda. People talk, share what is on their mind, ask each other things. Research on high-performing data teams consistently identifies strong cross-functional relationships as a differentiating factor. Those relationships do not build inside structured agendas.

Phase 2: The three questions (remaining time). Every person answers:

What are you currently working on? Not yesterday or tomorrow. Right now.

Is anything slowing you down? Not “do you have blockers?” Asking what slows people down catches problems before they become stops.

Do you need anything from anyone in this room? This is the question that makes the standup a coordination tool instead of a report.

Phase 3: Announcements (if time remains). Anything that does not fit elsewhere. If there is no time, skip it and end.

What to watch for

The standup tells you more through silence than through words. Watch for:

The same task appearing three standups in a row without progress

The person who never has anything slowing them down

Everyone’s answers clustering around the same project for days

Each of those is a signal that a conversation needs to happen outside the standup, never inside it. The standup is not a debugging session for individual blockers. It is a radar sweep: you note what looks wrong and follow up separately.
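If the team’s standup answers end up in a shared log, the first signal above can be checked before the meeting instead of relying on memory. This is a minimal sketch, not a prescribed tool: it assumes a hypothetical log in which each standup is a list of `StandupMention` records (a task name plus whether it moved since the previous standup). What counts as “progress” is for the team to define; `StandupMention` and `stalled_tasks` are illustrative names.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class StandupMention:
    """Hypothetical log entry: one task mentioned in one standup."""
    task: str
    progressed: bool  # did the task move since the previous standup?


def stalled_tasks(standups: list[list[StandupMention]], window: int = 3) -> set[str]:
    """Tasks mentioned without progress in each of the last `window` standups."""
    if window < 1:
        raise ValueError("window must be at least 1")
    if len(standups) < window:
        return set()  # not enough history to call anything stalled
    stalled = None
    for meeting in standups[-window:]:
        moved = {m.task for m in meeting if m.progressed}
        stuck = {m.task for m in meeting if not m.progressed} - moved
        # Keep only tasks that were stuck in every standup seen so far.
        stalled = stuck if stalled is None else stalled & stuck
    return stalled or set()
```

A non-empty result is a list of follow-up conversations to have after the standup, not items to debug inside it.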

The standup is not your briefing

If you are using the standup to find out what your team is working on, your system has a gap somewhere else. By the time your standup starts, you should already know.

The standup confirms what you know and surfaces what needs attention; it does not reveal your team’s work to you for the first time.

The 1:1

Most 1:1s are status updates with one person

You meet

You ask what they are working on

They tell you

You talk about a project

You schedule the next one

That is a meeting that could have been a Slack message and would have been better as three bullet points.

You keep running it this way because it feels efficient. What is actually happening is that you are using the highest-leverage ritual in your calendar to do the lowest-leverage thing possible.

70% of the variance in employee engagement is attributable to the quality of management. The 1:1 is the primary channel through which that quality gets expressed, or wasted.

Structure the 1:1 so the engineer sets the agenda, not the manager

The 1:1 runs in three parts:

Their agenda (first 10-15 min): Ask “what do you want to cover today?” then stop talking. If they have nothing, that is the most important signal in the room.

Your agenda (next 20-30 min): Feedback collected since the last 1:1. Career conversations. Tensions you observed. Things that have no home in any other ritual.

The close (last 5-10 min): Two or three things most important for them to focus on before you meet again. Anything you committed to follow up on.

Silence in a 1:1 is the most important data point

When someone has nothing for their agenda, there are two explanations:

They are heads-down and genuinely fine

They have learned that bringing things up here does not lead anywhere

Your job is to figure out which one it is. Ask directly: “Is there anything you have been sitting on that you have not brought up?” Most people will tell you if you actually ask.

Forgetting what you committed to is a management failure

Every commitment you make in a 1:1 and forget by the next one erodes trust. The person noticed. They always notice.

Keep notes after every 1:1. Just two or three sentences: what was discussed, what you committed to, what to follow up on. This is the difference between a leader who has a relationship with each person on their team and one who is starting from scratch every two weeks.

By 2028, one in four regretted staff attritions will be attributable to managers’ lack of data literacy. Employees leaving not because they lack skills, but because their managers cannot develop or support them. The 1:1 is where that problem either gets caught early or quietly compounds into a resignation.

You already know the problem. You have known it for months.

The gap between "knowing what to do" and "doing it" is just a decision. Inside the paid tier you get the frameworks, scripts, and templates I used to build my career over 16 years. Field-tested stuff!

The Sprint Check-in

Skipping the sprint check-in is why your delivery keeps drifting

Most data engineering leaders skip this ritual. They have standups and 1:1s, but with no prioritization process and fires to put out all the time, a planning meeting never seems necessary.

The standup is too short for sprint-level visibility. The 1:1 covers one person at a time. The sprint check-in is the only ritual where the whole team looks at the same picture together and asks whether it is accurate.

Without it, delivery drift is invisible until it becomes a missed deadline.

Review delivery honestly

The sprint check-in runs in three parts:

Delivery review.

What was the sprint goal?

What got done?

What did not?

For everything that did not land, the question is not who is responsible. The question is “what did you not understand when you planned this?” Estimation fails because of missing information. Naming what was missing is the only way to plan better next sprint.

Blocker review. Not individual blockers that got resolved, but patterns. If access issues slowed three engineers this sprint, that is a systemic problem someone needs to own.

Research on data team performance specifically identifies shared accountability for outcomes over reactive firefighting as a behavior that distinguishes high-performing teams. The blocker review is where that distinction gets made real.

Next sprint preview. Ten minutes of context before planning starts.

What matters most in the next two weeks?

What is the business reason?

What does the team need to know that will change how they work?

This is where you close the gap between engineers who are executing tasks and engineers who understand why those tasks exist.
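The blocker review’s test, the same slowdown hitting several engineers in one sprint, is simple enough to sketch. This assumes, hypothetically, that answers to “is anything slowing you down?” were logged during the sprint as (engineer, category) pairs; the category labels and the threshold of three people are illustrative, borrowed from the access-issues example, and `systemic_blockers` is not an existing tool.

```python
from __future__ import annotations

from collections import defaultdict
from typing import Iterable


def systemic_blockers(slowdowns: Iterable[tuple[str, str]], min_people: int = 3) -> list[str]:
    """Return blocker categories that slowed at least `min_people` distinct engineers.

    Counting distinct engineers rather than mentions separates a systemic
    problem from one person hitting the same issue repeatedly.
    """
    engineers_by_category = defaultdict(set)  # category -> set of engineers
    for engineer, category in slowdowns:
        engineers_by_category[category].add(engineer)
    return sorted(
        category
        for category, engineers in engineers_by_category.items()
        if len(engineers) >= min_people
    )
```

Each category this returns is a candidate for the “someone needs to own” conversation, not a list of individual blockers to re-solve.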

Patterns you refuse to name in the check-in become permanent

The temptation in every check-in is to stay positive. Things mostly went well. The miss was a one-off. You will do better next sprint.

That refusal to name patterns is how recurring problems become permanent friction. The same root cause surfaces every two weeks. Everyone knows it. Nobody says it out loud.

Nothing changes.

Say it out loud. Assign it. Fix it or decide you are not going to fix it. Either is better than pretending it is not there.

The Team Retro

Most retros fail because they produce catharsis, but not change

People raise the same things retro after retro. Requirements come in incomplete. Estimation is always off. Two people are still not communicating clearly. And nothing is different.

Eventually, people stop raising real issues. You have trained them that feedback is a ritual, not a mechanism.

You keep running retros this way because producing a long list of observations feels productive.

It is not. A list with no owner, no timeline, and no definition of done is documentation of complaints.

One change per retro, not a wishlist

Run the retro in three columns:

What went well (5 min). Round-robin, read aloud, group themes. Skip this column and the team starts feeling like everything is broken.

What could be better (15 min). Push for specificity. “Communication is bad” is not a problem. “I found out the pipeline broke the downstream model when three stakeholders were already angry” is a problem.

What we are doing about it (remaining time). One change, one owner, one definition of done.

That constraint is the entire point. One change per retro means it actually happens. Three changes means two of them disappear by Tuesday.

Protect the third column

The reason most retros fail is that the first two columns consume almost all the time. The third column gets five minutes of tired people picking something vague before everyone has to leave for the next meeting.

Timebox the first two. Hard stop. The third column is the only reason you are in the meeting.

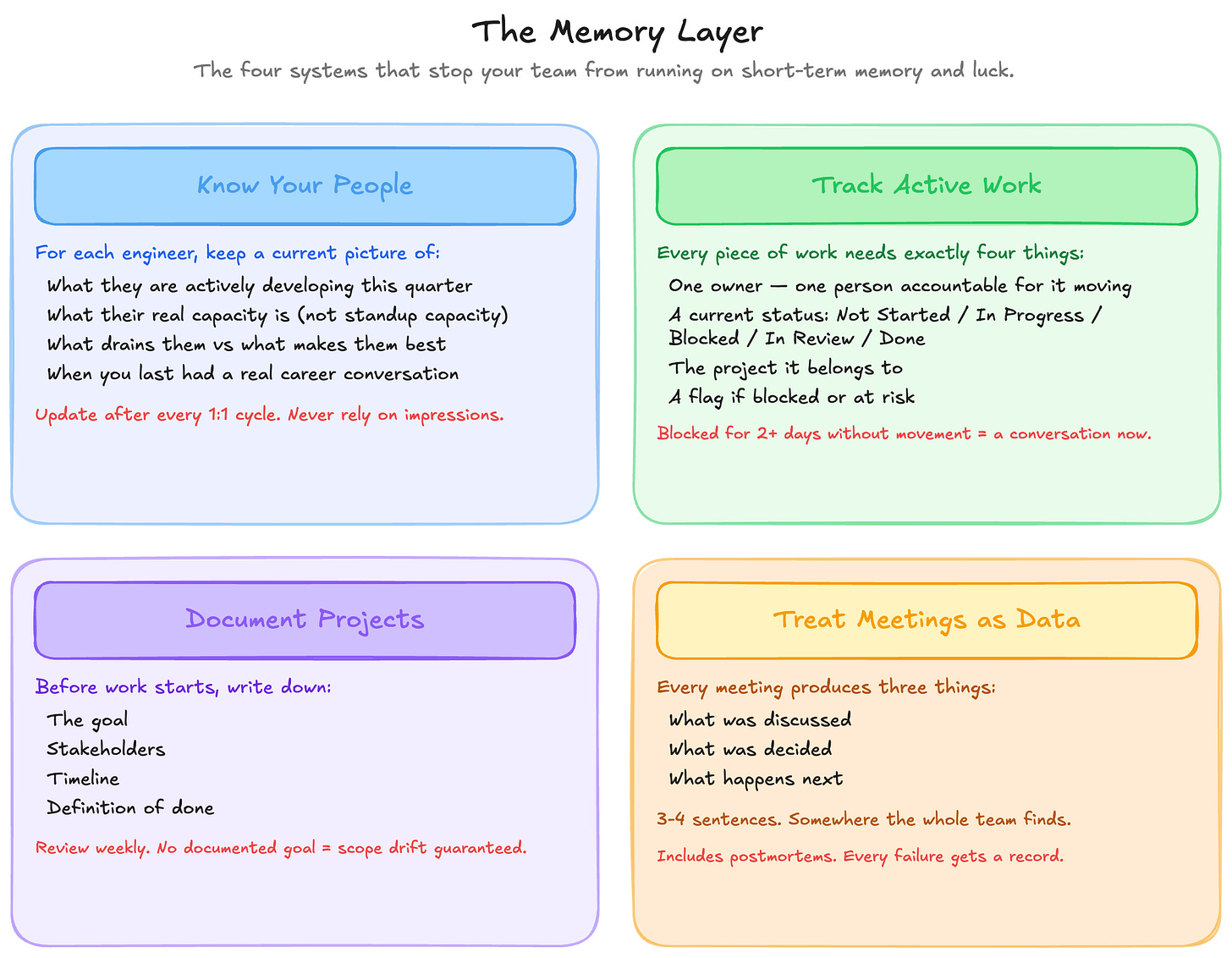

The Memory Layer

Your team runs on short-term memory and it will eventually cost you

Most data engineering teams capture almost nothing. Work moves through people’s heads, conversations happen in Slack and evaporate, decisions get made and their reasoning is never recorded.

This looks fine until someone is out sick for a week, or a senior engineer leaves, or a stakeholder asks why a pipeline works the way it does and the only person who knows has not worked there for two years.

Silos of knowledge, where critical information exists only in individual heads, are one of the four core failure modes of data science teams, alongside deployment friction, tool mismatches, and unmonitored models. The fix is building the habit of capturing information as a team practice.

Know your people beyond what they are working on

Before you can lead someone well, you need to know them. Not their job title or years of experience. Who they actually are as an engineer and where they are trying to go.

For each person on your team, you need a current, accurate picture of:

What they are actively developing this quarter

What their current capacity actually is, not what it looks like in the standup

What kind of work drains them versus what makes them do their best work

When you last had a real career conversation with them

Research on team development identifies consistent failure patterns in poor management: waiting too long to address issues, and treating development as one-size-fits-all. Both failures come from the same root cause:

The manager does not have an accurate, current picture of each person.

Update what you know about each person after every 1:1 cycle. The leaders who skip this are running their team on impressions. They are surprised by resignations that should not have been surprising.

Track active work so the standup confirms what you already know

Every piece of active work needs four things:

One owner. Not a team, not a pair, one person accountable for it moving

A current status: Not Started, In Progress, Blocked, In Review, Done

The project it belongs to

A flag if it is blocked or at risk

That is the whole system. Five status states. One owner. Two flags.

The hardest part is getting the team to maintain it. If you are updating the task list yourself, you have taken ownership of something that belongs to your engineers. Your job is to check it, ask about what looks wrong, and make updates non-negotiable.

Watch the Blocked status most closely. Anything sitting there for more than two days without movement is a conversation you need to have before the next standup.
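For teams that keep the task list somewhere scriptable, the four fields and the two-day rule fit in a few lines. A minimal sketch under stated assumptions: `status_since` (the date the status last changed) is a hypothetical field that most trackers can supply, the two flags are plain booleans matching the list above, and the two-day check reads the Blocked status.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date
from enum import Enum


class Status(Enum):
    NOT_STARTED = "Not Started"
    IN_PROGRESS = "In Progress"
    BLOCKED = "Blocked"
    IN_REVIEW = "In Review"
    DONE = "Done"


@dataclass
class WorkItem:
    title: str
    owner: str           # one person, never a team or a pair
    project: str
    status: Status
    status_since: date   # hypothetical: date the status last changed
    blocked: bool = False
    at_risk: bool = False

    def __post_init__(self) -> None:
        # Enforce the "one owner" rule at creation time.
        if not self.owner.strip():
            raise ValueError(f"{self.title!r} has no owner")


def stale_blocked(items: list[WorkItem], today: date, max_days: int = 2) -> list[WorkItem]:
    """Items sitting in Blocked for more than `max_days` days without a status change."""
    return [
        item
        for item in items
        if item.status is Status.BLOCKED and (today - item.status_since).days > max_days
    ]
```

Running `stale_blocked` the evening before a standup gives you the conversations to have before it starts.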

Document projects so decisions survive the people who made them

Every significant project needs a goal, a set of stakeholders, a timeline, and a definition of done, written down before the work starts.

When a project does not have a documented goal, the first thing that happens when it hits a snag is that everyone has a different idea of what success was supposed to look like. Scope drifts. Decisions get made by whoever speaks loudest. The timeline slips in ways nobody can explain because nobody wrote down what was originally intended.

Only 48% of digital initiatives meet their outcome targets globally. The organizations that hit 71% share one characteristic: success was defined and co-owned before delivery started, not reconstructed after it missed.

Review active projects weekly. Look for what is at risk and what has not moved. Both are signals that need attention before they become announcements in a stakeholder meeting.

Stop collecting advice. Start operating differently.

I share the exact playbooks that helped me become Head of Data, negotiate a 40% raise, and survive 4 M&A transactions. Paid subscribers use them to get promoted.

Treat meetings as data

Every meeting your team has should produce three things:

what was discussed

what was decided

what happens next

Write three or four sentences somewhere the whole team can find them.

The reason is pattern recognition.

When the same problem surfaces in sprint three that surfaced in sprint one, you want to know whether you discussed it before. When a retro action disappears without anyone noticing, the record shows it was assigned. When a stakeholder claims a decision was never made, you have the date it was made.

High-performing data teams consistently maintain visible decision logs and documented assumptions. Most teams treat meetings as ephemeral. The teams that learn fastest treat them as the raw material for every difficult conversation they will eventually need to have.
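The pattern-recognition payoff depends on records having the same three parts every time. A minimal sketch, assuming records are kept as simple structured entries; `MeetingRecord` and `prior_mentions` are hypothetical names, and the case-insensitive keyword match is a deliberately crude stand-in for whatever search your wiki or Notion workspace already provides.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class MeetingRecord:
    held_on: date
    meeting: str      # e.g. "Sprint check-in", "Retro"
    discussed: str
    decided: str
    next_steps: str


def prior_mentions(records: list[MeetingRecord], keyword: str) -> list[date]:
    """Dates of meetings whose record mentions `keyword` (case-insensitive)."""
    needle = keyword.lower()
    return sorted(
        r.held_on
        for r in records
        if needle in f"{r.discussed} {r.decided} {r.next_steps}".lower()
    )
```

If a problem raised today returns two or three earlier dates, that is the pattern to name out loud in the next check-in.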

Run postmortems to learn from failures, not to survive them

Things will break. Pipelines will fail. Data will be wrong. Incidents will happen.

The question is not whether. It is whether anything changes because of it.

A postmortem is a structured conversation after a failure:

What happened

Why it happened at the system level

What the fix was

What changes prevent the same failure from recurring

Blameless postmortems are not a kindness to your engineers. They are the only way to get accurate information. When people fear blame, they tell you a version of events that protects them. You treat a symptom while the root cause stays intact and the same incident happens again six weeks later.

Unmonitored systems and processes without structured review are a core failure mode of data science teams, and the damage compounds. Each unresolved failure erodes stakeholder confidence and makes the next investment request harder to justify.

Over time, the postmortem record becomes a knowledge base:

why things work the way they do

where the structural fragility is

what has been tried before

Most data engineering teams treat incidents as things to get through. The teams that improve treat them as information.
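The knowledge-base effect is easiest to see when postmortems share a structure: the same system-level cause showing up twice is the structural fragility made visible. A minimal sketch, assuming each postmortem is stored with the four parts listed above; `Postmortem` and `recurring_causes` are hypothetical names. Exact-string matching on the cause is a simplification: in practice the team would need a small shared vocabulary of causes for counting to mean anything.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Postmortem:
    occurred_on: date
    what_happened: str
    system_cause: str   # why it happened at the system level, not who did it
    fix: str
    prevention: str     # what changes stop the same failure from recurring


def recurring_causes(postmortems: list[Postmortem], min_count: int = 2) -> dict[str, int]:
    """System-level causes that appear in at least `min_count` postmortems."""
    counts = Counter(p.system_cause.strip().lower() for p in postmortems)
    return {cause: n for cause, n in counts.items() if n >= min_count}
```

A cause that appears twice means an earlier prevention step either never happened or did not work, which is exactly the conversation a blameless postmortem exists to enable.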

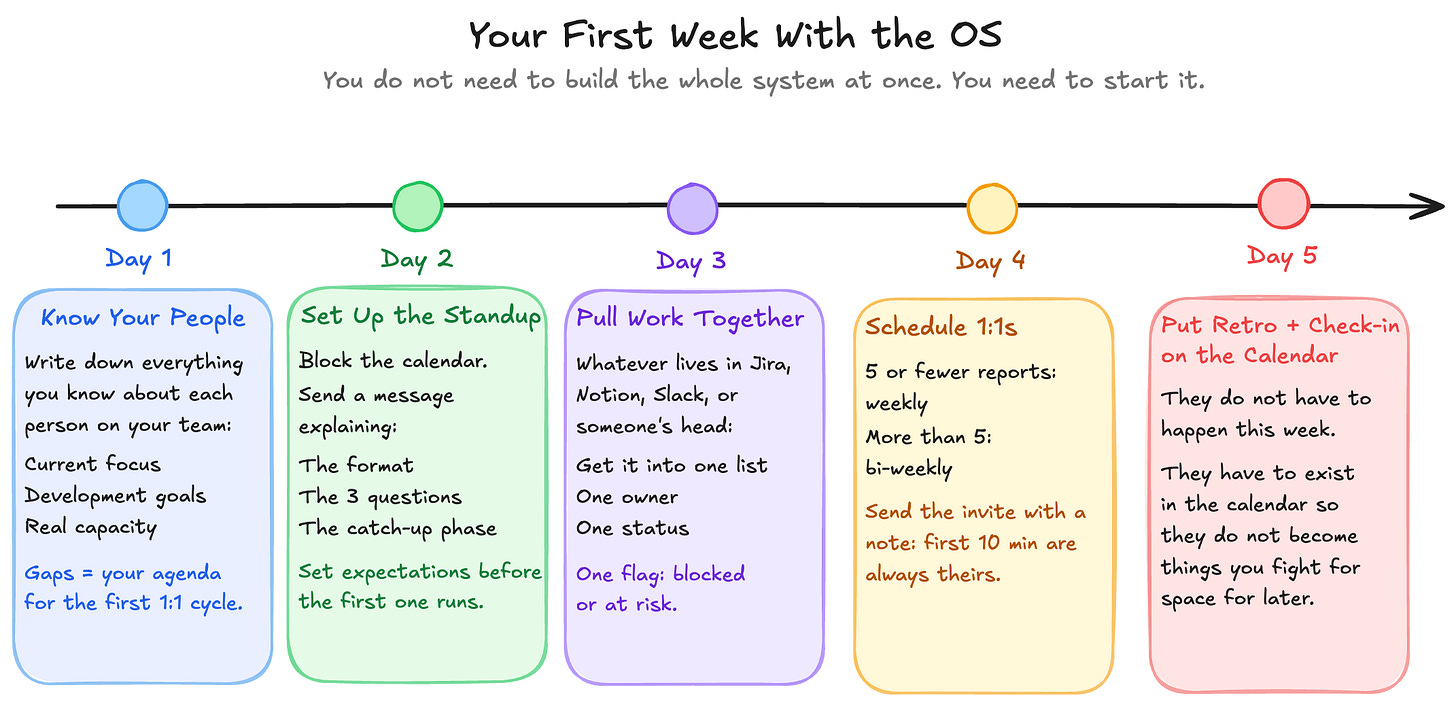

Your First Week With the OS

You do not need to build the whole system at once. You need to start it.

Day 1: Know your people. Write down everything you know about each person: current focus, development goals, capacity. The gaps you find are your agenda for the first 1:1 cycle.

Day 2: Set up the standup. Do not just block the calendar. Send a message explaining the format, the three questions, the catch-up phase. Set expectations before the first one runs.

Day 3: Pull active work into one place. Whatever lives in Jira, Notion, Slack, or someone’s head, get it into a single list with one owner, one status, one flag for blocked or at risk.

Day 4: Schedule 1:1s. Five or fewer reports: weekly. More than that: every two weeks. Send the invite with a short note about what you want to cover in the first one, and that the first ten minutes are always theirs.

Day 5: Put the sprint check-in and retro on the calendar. They do not have to happen this week. They have to exist in the calendar so they do not become things you fight for space for later.

The goal is that next Monday looks different from last Monday.

What Changes When You Have an OS

You stop being surprised by things that were always visible

The most significant change is timing.

When work is tracked, when standups surface blockers, when projects have documented goals and weekly reviews, you see problems coming.

Not always early enough to prevent them, but ideally early enough to respond before they escalate.

The shift from reactive to proactive is the one that changes what leading a team actually feels like.

Your engineers stop carrying problems alone

One of the most expensive things that happens in data engineering teams is invisible load: Someone is stuck. They do not want to admit it. They sit on it for three days. By the time it surfaces, a week is gone and the sprint is already at risk.

The rituals are designed to surface that load. People stop carrying things alone because the system makes it normal to say when something is wrong.

Your most difficult conversations get easier

Performance reviews stop being stressful when you have 1:1 notes instead of six months of impressions. Reliability conversations with stakeholders have postmortems behind them. Headcount requests have project history and capacity data to support them.

48% of HR leaders agree the demand for new skills is evolving faster than existing talent structures can support, which means every conversation about team development and investment is becoming more important, not less.

The leaders who can back those conversations with evidence have more credibility and get more resources. The ones running on instinct get told to come back with data.

You are a data person. Come with data.

I built the resource library I wish existed when I was 25 years old.

Career scripts. Business translation templates. Stakeholder playbooks. Meeting frameworks.

Every single one came from real situations, real mistakes, and real results. Paid members get the whole thing.

Get the Full System

Everything in this article is built into a Notion template you can copy and use today. All five components:

team tracking

active work

project management

meeting records

postmortems

Everything is pre-configured with the structures, properties, and templates that match what you read above.

Paid subscribers get the Operating System for free.